Data Processing

Data processing is the systematic series of actions applied to raw data, transforming it into structured, actionable information for analysis, reporting, and de...

Data transfer is the process of relocating, copying, or transmitting data between digital environments, critical for continuity, analytics, and compliance.

Data transfer, also known as data movement, is the comprehensive process of relocating, copying, or transmitting data from one digital environment to another. This includes activities such as migrating data between storage devices, transferring records among databases and servers, synchronizing data between on-premises and cloud platforms, and streaming information across applications and geographic boundaries. Data transfer is foundational to modern information technology, supporting everything from operational continuity to large-scale analytics and regulatory compliance.

Whether moving structured data from a relational database, unstructured files from a distributed file system, or time-series sensor data from IoT devices, data transfer underpins critical business and operational processes. It gives cross-functional teams access to up-to-date information, makes IT infrastructure more resilient through redundancy, and supports multi-cloud and hybrid cloud deployments by moving data between diverse environments. As data volumes grow, efficient and secure data transfer strategies are essential for scaling operations, controlling costs, and meeting evolving regulatory requirements.

The movement of data is integral to operational efficiency, business agility, and digital transformation. Seamless access to information ensures that stakeholders—from decision-makers to automated systems—operate with accurate, real-time data. This is especially critical in distributed organizations, where data may reside in multiple data centers, cloud platforms, or edge devices.

To maintain data integrity and consistency, data movement solutions incorporate validation, error checking, and reconciliation processes—ensuring data remains accurate and up-to-date.
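One common form of the validation described above is a checksum comparison after a copy. The following is a minimal sketch using only the Python standard library; the function names `sha256_of` and `copy_with_verification` are illustrative assumptions, not part of any particular data movement product.

```python
import hashlib
import shutil
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large files do not have to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def copy_with_verification(source: Path, target: Path) -> str:
    """Copy source to target, then raise if the two checksums disagree."""
    expected = sha256_of(source)
    shutil.copyfile(source, target)
    actual = sha256_of(target)
    if actual != expected:
        raise IOError(f"checksum mismatch for {target}: {actual} != {expected}")
    return actual
```

Calling `copy_with_verification(Path("export.csv"), Path("/mnt/backup/export.csv"))` (hypothetical paths) returns the verified digest, which can also be stored for later reconciliation.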

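Data movement encompasses a spectrum of activities, each serving a distinct role in an organization's data strategy: migration, replication, synchronization, integration, streaming, ingestion, ETL/ELT, and reverse ETL.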

Data movement differs from data flow, which refers to the logical path and processing sequence data takes through a system.

Data migration is the systematic process of moving data between environments, applications, or storage media. It is common during IT modernization, cloud adoption, or the decommissioning of legacy systems, and it involves discovery, mapping, transformation, validation, and execution. The data's structure, format, or encoding may need to change along the way, and robust recovery mechanisms such as backups and rollback plans minimize risk.
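The mapping, transformation, and validation steps can be sketched on a small scale with two in-memory SQLite databases. Everything here is an assumption made for illustration: the table names `legacy_customers` and `customers`, the columns, the DD/MM/YYYY source date format, and the premise that the target table starts empty.

```python
import sqlite3
from datetime import datetime


def migrate_customers(source: sqlite3.Connection, target: sqlite3.Connection) -> int:
    """Move rows into the new schema in one transaction and validate the count."""
    rows = source.execute(
        "SELECT id, full_name, signup FROM legacy_customers"
    ).fetchall()
    # Transformation: trim names and convert DD/MM/YYYY dates to ISO 8601.
    converted = [
        (row_id, name.strip(), datetime.strptime(signup, "%d/%m/%Y").date().isoformat())
        for row_id, name, signup in rows
    ]
    with target:  # commits on success, rolls back if an exception escapes
        target.executemany(
            "INSERT INTO customers (id, name, signup_date) VALUES (?, ?, ?)",
            converted,
        )
        migrated = target.execute("SELECT COUNT(*) FROM customers").fetchone()[0]
        # Validation: the (initially empty) target must hold every source row.
        if migrated != len(rows):
            raise ValueError(f"expected {len(rows)} rows, found {migrated}")
    return migrated


source = sqlite3.connect(":memory:")
source.execute(
    "CREATE TABLE legacy_customers (id INTEGER PRIMARY KEY, full_name TEXT, signup TEXT)"
)
source.executemany(
    "INSERT INTO legacy_customers VALUES (?, ?, ?)",
    [(1, " Ada Lovelace ", "10/12/2015"), (2, "Alan Turing", "23/06/2012")],
)
target = sqlite3.connect(":memory:")
target.execute(
    "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT NOT NULL, signup_date TEXT NOT NULL)"
)
migrated_count = migrate_customers(source, target)
```

Running the insert and the count check inside a single transaction means a failed validation leaves the target unchanged, which is the small-scale equivalent of a rollback plan.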

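Replication copies and maintains datasets across multiple systems or locations to improve availability and fault tolerance. Synchronous replication acknowledges a write only after the replica has applied it; asynchronous replication acknowledges immediately and ships the change afterward, which lowers write latency but lets the replica lag. Database replication (e.g., Oracle Data Guard, SQL Server Always On availability groups) supports high availability and disaster recovery, while cloud architectures replicate across regions for compliance and low-latency access.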
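The difference between the two strategies can be illustrated with a toy in-process model. This is a sketch only: the `Primary` and `Replica` classes are hypothetical, and real systems replicate over a network with failure handling that is omitted here.

```python
from collections import deque


class Replica:
    """Holds a copy of the primary's key-value data."""

    def __init__(self) -> None:
        self.data: dict[str, str] = {}

    def apply(self, key: str, value: str) -> None:
        self.data[key] = value


class Primary:
    """Accepts writes and forwards them to one replica."""

    def __init__(self, replica: Replica, synchronous: bool) -> None:
        self.data: dict[str, str] = {}
        self.replica = replica
        self.synchronous = synchronous
        self.pending: deque[tuple[str, str]] = deque()

    def write(self, key: str, value: str) -> None:
        self.data[key] = value
        if self.synchronous:
            # Synchronous: the replica is updated before write() returns.
            self.replica.apply(key, value)
        else:
            # Asynchronous: the change is queued and shipped later.
            self.pending.append((key, value))

    def flush(self) -> int:
        """Ship queued changes to the replica and return how many were sent."""
        shipped = 0
        while self.pending:
            self.replica.apply(*self.pending.popleft())
            shipped += 1
        return shipped


sync_replica = Replica()
Primary(sync_replica, synchronous=True).write("flight", "AB123")

async_replica = Replica()
async_primary = Primary(async_replica, synchronous=False)
async_primary.write("flight", "AB123")
replica_before_flush = dict(async_replica.data)  # still empty: the replica lags
shipped_count = async_primary.flush()
```

In the asynchronous case the replica is empty until `flush()` runs, which is the window in which a failover could lose the most recent writes.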

Synchronization maintains consistent and up-to-date data across systems. Change Data Capture (CDC) identifies and propagates only changes, enabling near real-time consistency. Tools like Oracle GoldenGate, AWS DMS, and Debezium provide robust CDC capabilities.
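The idea of propagating only changes can be sketched by comparing two snapshots keyed by primary key. Note the assumption: this is a snapshot-diff simplification for illustration, not the way the tools named above capture changes, and the `capture_changes` and `apply_changes` helpers are hypothetical.

```python
from typing import Any, Optional

Row = dict[str, Any]
Change = tuple[str, int, Optional[Row]]


def capture_changes(before: dict[int, Row], after: dict[int, Row]) -> list[Change]:
    """Return the inserts, updates, and deletes that turn `before` into `after`."""
    changes: list[Change] = []
    for key in sorted(after.keys() - before.keys()):
        changes.append(("insert", key, after[key]))
    for key in sorted(before.keys() & after.keys()):
        if before[key] != after[key]:
            changes.append(("update", key, after[key]))
    for key in sorted(before.keys() - after.keys()):
        changes.append(("delete", key, None))
    return changes


def apply_changes(target: dict[int, Row], changes: list[Change]) -> None:
    """Replay captured changes against a downstream copy."""
    for operation, key, row in changes:
        if operation == "delete":
            target.pop(key, None)
        else:
            target[key] = row


before = {1: {"status": "scheduled"}, 2: {"status": "boarding"}, 3: {"status": "landed"}}
after = {1: {"status": "scheduled"}, 2: {"status": "departed"}, 4: {"status": "scheduled"}}

changes = capture_changes(before, after)
downstream = dict(before)  # the copy that needs to catch up
apply_changes(downstream, changes)
```

Only three change records cross the wire here, and the unchanged row with key 1 is never retransmitted.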

Data integration combines data from diverse sources for unified analysis or operational use. Solutions provide connectors, transformations, and cleansing for a consistent dataset—crucial for breaking down data silos and enabling analytics.

Data streaming is the real-time transfer and processing of event data. Platforms like Apache Kafka and Amazon Kinesis enable organizations to ingest, process, and analyze data on-the-fly, supporting instant responses and real-time insights.
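Processing events as they arrive, rather than after a batch has landed, can be sketched with a plain Python generator. This is an illustration under stated assumptions: it does not use Kafka or Kinesis, the `Event` type and `tumbling_averages` function are hypothetical, and events are assumed to arrive in timestamp order.

```python
from collections.abc import Iterable, Iterator
from typing import NamedTuple, Optional


class Event(NamedTuple):
    timestamp: float  # seconds since the start of the stream
    sensor: str
    value: float


def tumbling_averages(
    events: Iterable[Event], window_seconds: float
) -> Iterator[tuple[float, float]]:
    """Yield (window_start, mean value) each time a fixed-size window closes."""
    window_start: Optional[float] = None
    total = 0.0
    count = 0
    for event in events:
        start = (event.timestamp // window_seconds) * window_seconds
        if window_start is not None and start != window_start:
            # The event belongs to a new window, so emit the finished one.
            yield window_start, total / count
            total, count = 0.0, 0
        window_start = start
        total += event.value
        count += 1
    if count:
        # Emit the final, possibly partial, window when the stream ends.
        yield window_start, total / count


readings = [
    Event(1.0, "engine-temp", 20.0),
    Event(4.0, "engine-temp", 22.0),
    Event(12.0, "engine-temp", 30.0),
]
windows = list(tumbling_averages(readings, window_seconds=10.0))
```

Because the function is a generator, each result is available as soon as its window closes, without waiting for the rest of the input.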

Ingestion collects and imports data from multiple sources into centralized storage systems (data lakes, warehouses). Tools like Logstash, AWS Glue, and Google Cloud Dataflow offer robust ingestion pipelines for scalable analytics.

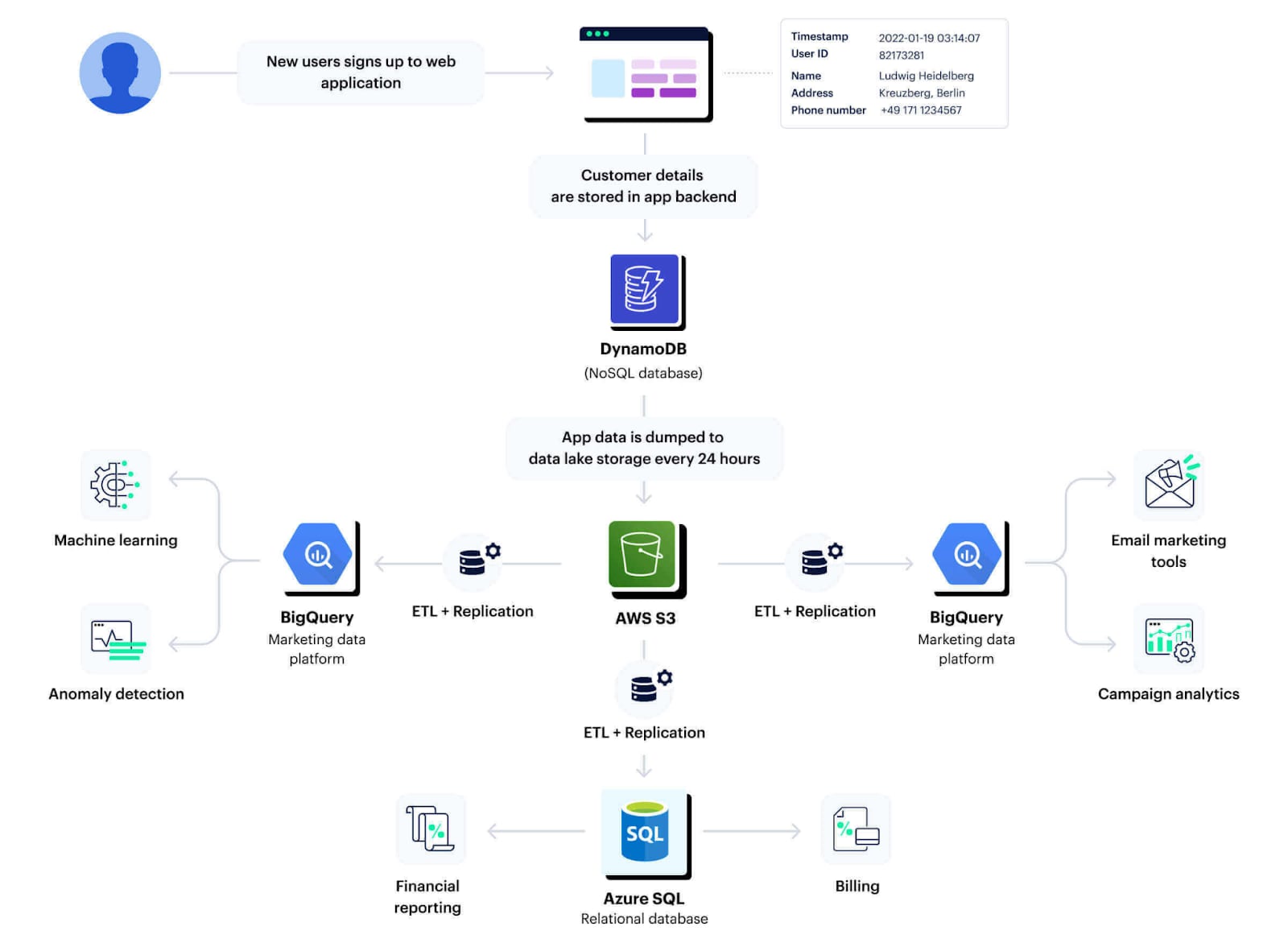

ETL (Extract, Transform, Load) and ELT (Extract, Load, Transform) are methodologies for preparing and moving data from sources to targets, typically for analytics. ETL transforms data before loading; ELT loads data before transforming it in the target system. Both are orchestrated using modern data pipeline tools.
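The ordering difference can be made concrete with SQLite standing in for the target system. This is a minimal sketch under stated assumptions: the `RAW_ORDERS` sample data and the table names are invented, and a real pipeline would extract from an external source instead of a Python list.

```python
import sqlite3

# Extracted records, still in their raw textual form (hypothetical sample data).
RAW_ORDERS = [("  Alice ", "12.50"), ("BOB", "7")]


def run_etl(conn: sqlite3.Connection) -> list[tuple[str, float]]:
    """ETL: clean the data in the pipeline, then load the finished rows."""
    conn.execute("CREATE TABLE orders_etl (customer TEXT, amount REAL)")
    cleaned = [(customer.strip().lower(), float(amount)) for customer, amount in RAW_ORDERS]
    conn.executemany("INSERT INTO orders_etl VALUES (?, ?)", cleaned)
    return conn.execute(
        "SELECT customer, amount FROM orders_etl ORDER BY customer"
    ).fetchall()


def run_elt(conn: sqlite3.Connection) -> list[tuple[str, float]]:
    """ELT: load the raw rows first, then transform them with SQL in the target."""
    conn.execute("CREATE TABLE raw_orders (customer TEXT, amount TEXT)")
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?)", RAW_ORDERS)
    conn.execute(
        "CREATE TABLE orders_elt AS "
        "SELECT LOWER(TRIM(customer)) AS customer, CAST(amount AS REAL) AS amount "
        "FROM raw_orders"
    )
    return conn.execute(
        "SELECT customer, amount FROM orders_elt ORDER BY customer"
    ).fetchall()


etl_rows = run_etl(sqlite3.connect(":memory:"))
elt_rows = run_elt(sqlite3.connect(":memory:"))
```

Both paths end with the same cleaned rows. The ELT path also keeps `raw_orders` in the target, so the transformation can be rerun or revised later without extracting again.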

Reverse ETL moves data from analytical stores back into operational systems, so business applications can leverage up-to-date insights for day-to-day operations.

Protocols define the rules for exchanging data between systems. Common choices include SFTP, HTTPS, SMB, NFS, and proprietary cloud APIs.

ICAO and industry guidelines require secure, authenticated protocols, encryption in transit, and detailed logging.
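For HTTPS-based transfers, encryption in transit and server authentication largely come down to how the TLS client is configured. The sketch below uses Python's standard `ssl` module; the function name `strict_client_context` and the choice of TLS 1.2 as the minimum version are assumptions for illustration, not requirements quoted from any standard.

```python
import ssl


def strict_client_context() -> ssl.SSLContext:
    """Build a client-side TLS context that verifies the server it talks to."""
    # create_default_context() enables certificate and hostname verification.
    context = ssl.create_default_context()
    # Assumption: refuse protocol versions older than TLS 1.2.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = strict_client_context()
```

The resulting context can be passed to standard-library clients, for example `urllib.request.urlopen(url, context=context)`, so that a transfer fails instead of proceeding over an unverified connection.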

A robust ecosystem of tools supports data movement, each tailored to specific needs. Examples include AWS DMS, Oracle GoldenGate, Talend, Informatica, Apache Kafka, and Fivetran.

Tool selection depends on compatibility, security, scalability, and ease of use.

In aviation, the International Civil Aviation Organization (ICAO) prescribes strict protocols for data movement—emphasizing data integrity, traceability, encryption, and validation. These standards ensure safety, reliability, and compliance in handling operational, maintenance, and regulatory data. Similar rigor applies in healthcare, finance, and other regulated sectors.

Data transfer (movement) is a strategic enabler of digital business, supporting resilience, agility, and compliance. As organizations modernize IT infrastructure and scale operations, robust, secure, and efficient data movement strategies are critical for business success.


Data transfer, also known as data movement, is the process of moving, copying, or transmitting data between digital environments—such as databases, storage systems, cloud platforms, or applications. It includes activities like migration, replication, synchronization, integration, streaming, and ingestion, ensuring that data is accessible, consistent, and secure across diverse systems.

Data movement is critical for ensuring business continuity, enabling cross-platform analytics, supporting disaster recovery, complying with regulations, and powering digital transformation. It allows organizations to leverage up-to-date information, integrate legacy and modern systems, and quickly recover from disruptions.

The main types of data movement are migration (moving data between systems), replication (creating copies for high availability), synchronization (maintaining consistency across systems), integration (combining data from multiple sources), streaming (real-time data flow), ingestion (consolidating data into central stores), ETL/ELT (extracting, transforming, and loading data, with the transform step before or after the load), and reverse ETL (moving data from analytics platforms into operational systems).

Common challenges in data movement include ensuring data security and privacy, maintaining data integrity and consistency, minimizing downtime during migration, handling large volumes and velocities of data, managing schema or format changes, resolving conflicts in distributed environments, and complying with industry regulations.

Common protocols include SFTP, HTTPS, SMB, NFS, and proprietary cloud APIs. SFTP and HTTPS encrypt and authenticate transfers by design, while SMB and NFS provide encryption only when configured to do so (for example, SMB 3.x encryption or NFSv4 with Kerberos). Common tools include AWS DMS, Oracle GoldenGate, Talend, Informatica, Apache Kafka, Fivetran, and many others, each suited to specific data movement needs such as replication, integration, streaming, or migration.


